Quick Summary

- What it is: LangChain is an open-source Python framework for building LLM-powered applications. It connects large language models to memory, data sources, tools, and external APIs.

- Created by: Harrison Chase, October 2022

- Why it matters: Raw LLMs have no memory, no data access, and no ability to take real-world actions. LangChain solves all three.

- Best for: Developers and AI engineers exploring LLM application development in Python

What is LangChain?

LangChain is an open-source framework that makes it easier to build applications using large language models.

When you use a raw LLM like GPT or Claude directly, you can send it a prompt and get a response. That is useful, but limited. The model has no memory of previous conversations. It cannot access your company's data. It cannot call external APIs. It cannot take actions in the real world.

LangChain solves all of this. It acts as the connective layer between an LLM and everything else an application needs: databases, APIs, documents, memory, tools, and multiple agents working together.

Think of LangChain as the plumbing that makes LLMs actually useful in production.

It was created by Harrison Chase and launched in October 2022. Within eight months it became the fastest-growing open-source project on GitHub. As of 2026 it is the most widely used framework for building LLM-powered applications.

LangChain is available in both Python and JavaScript. For AI and ML development in India, Python is the standard.

Why Do Developers Use LangChain?

Raw LLM APIs have three fundamental limitations that LangChain addresses directly.

- No memory: Every API call to an LLM starts from scratch. If you are building a chatbot, you have to manually pass the entire conversation history with every new message. This gets messy fast. LangChain provides memory modules that handle this automatically.

- No access to external data: LLMs are trained on data up to a cutoff date. They cannot read your internal documents, query your database, or access live information unless you provide it. LangChain makes it straightforward to connect an LLM to any data source and give it context at runtime.

- No ability to take actions: A plain LLM produces text. It cannot search the web, run code, call an API, or interact with external systems. LangChain gives LLMs access to tools that let them do all of this.

Beyond solving these limitations, LangChain saves developers enormous amounts of time. Instead of writing custom integration code from scratch for every LLM, every database, and every API, LangChain provides standardized components that work together out of the box.

Core Components of LangChain

Understanding LangChain's architecture comes down to six core components. Each one addresses a specific challenge in building LLM-powered applications.

1. Models

LangChain provides a standard interface for working with virtually any LLM. This means you can swap between GPT, Claude, Gemini, Mistral, Llama, or any open-source model with minimal code changes.

This is one of LangChain's most practical advantages. You are not locked into a single model provider. You can switch models, compare outputs, or use different models for different parts of the same application.
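To make the "standard interface" idea concrete, here is a minimal sketch in plain Python. It does not use LangChain itself: `ChatModel`, `FakeProviderA`, and `FakeProviderB` are hypothetical names, and the "providers" return canned text instead of calling a real API. The point is only that application code written against one shared method does not change when the model behind it does.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical shared interface: every provider exposes invoke()."""

    def invoke(self, prompt: str) -> str: ...


class FakeProviderA:
    """Stand-in for one provider's client; returns canned text, no network."""

    def invoke(self, prompt: str) -> str:
        return f"[provider-a] {prompt.upper()}"


class FakeProviderB:
    """Stand-in for a second provider with a different style of answer."""

    def invoke(self, prompt: str) -> str:
        return f"[provider-b] {prompt[::-1]}"


def answer(model: ChatModel, question: str) -> str:
    # Application code depends only on the shared interface,
    # so swapping providers does not change this function.
    return model.invoke(question)


models = {"a": FakeProviderA(), "b": FakeProviderB()}
replies = {name: answer(model, "hello") for name, model in models.items()}
print(replies)
```

Comparing outputs across models, as described above, then becomes a loop over a dictionary rather than a rewrite.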

2. Prompt Templates

Prompts are the instructions you give to an LLM. In production applications, prompts are rarely static. They include variables, user inputs, retrieved documents, and formatting instructions.

LangChain's PromptTemplate class lets you define reusable prompt structures with placeholders. You fill in the placeholders at runtime based on the user's input or other context. This makes prompt management cleaner, testable, and easier to maintain.
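The sketch below shows the placeholder pattern using only the Python standard library. `SimplePromptTemplate` is a hypothetical stand-in written for this article, not LangChain's `PromptTemplate`, whose real API is richer; the product name and question are made-up values.

```python
from dataclasses import dataclass
from string import Formatter


@dataclass(frozen=True)
class SimplePromptTemplate:
    """Hypothetical reusable prompt with {placeholder} variables."""

    template: str

    @property
    def input_variables(self) -> list[str]:
        # Collect the {placeholder} names found in the template text.
        return sorted({name for _, name, _, _ in Formatter().parse(self.template) if name})

    def format(self, **values: str) -> str:
        # Fail early with a clear message if a variable was not supplied.
        missing = [v for v in self.input_variables if v not in values]
        if missing:
            raise KeyError(f"missing prompt variables: {missing}")
        return self.template.format(**values)


support_prompt = SimplePromptTemplate(
    "You are a support assistant for {product}.\n"
    "Answer the customer's question in a {tone} tone.\n"
    "Question: {question}"
)

# Placeholders are filled in at runtime from user input or other context.
prompt_text = support_prompt.format(
    product="Acme Router", tone="friendly", question="How do I reset it?"
)
print(prompt_text)
```

Because the template is a plain object, it can be unit-tested and versioned separately from the code that calls the model.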

3. Chains

Chains are the core of LangChain's workflow logic. A chain connects multiple components together in a sequence.

A simple chain might be: take a user's question, format it into a prompt template, send it to an LLM, and parse the response. A more complex chain might retrieve relevant documents first, then include those documents in the prompt, then send it to the model.

The name LangChain itself comes from this idea of chaining components together into a workflow.
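The simple chain described above (format, call the model, parse) can be sketched as function composition. This is plain Python rather than LangChain's own chain syntax; `make_chain` and `fake_llm` are hypothetical helpers, and the "model" is a stub that returns canned text.

```python
from functools import reduce
from typing import Any, Callable

Step = Callable[[Any], Any]


def make_chain(*steps: Step) -> Step:
    """Return a function that feeds each step's output into the next step."""

    def run(value: Any) -> Any:
        return reduce(lambda acc, step: step(acc), steps, value)

    return run


def format_prompt(question: str) -> str:
    return f"Answer in one word.\nQuestion: {question}\nAnswer:"


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; returns canned text with stray whitespace.
    return "  Paris \n" if "France" in prompt else "  unknown \n"


def parse_output(raw: str) -> str:
    # Clean up the raw model text before handing it to the application.
    return raw.strip()


qa_chain = make_chain(format_prompt, fake_llm, parse_output)
result = qa_chain("What is the capital of France?")
print(result)
```

A retrieval step, as in the more complex chain mentioned above, would simply be one more function placed before `format_prompt`.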

4. Memory

Memory modules let your application remember previous interactions.

LangChain offers several types of memory. Conversation buffer memory stores the full conversation history. Summary memory compresses older parts of the conversation to save tokens. Entity memory tracks specific pieces of information mentioned across the conversation.

For any application where context matters across turns, like a customer support bot or a personal assistant, memory is essential.
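Here is a rough sketch of the difference between buffer memory and summary memory, written in plain Python. The class names are hypothetical and are not LangChain's memory classes. The `fake_summarize` function stands in for an LLM summarization call by simply truncating text.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BufferMemory:
    """Keeps every turn verbatim and replays it into the next prompt."""

    turns: list[tuple[str, str]] = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))

    def as_context(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


@dataclass
class SummaryMemory:
    """Keeps the last `keep_last` turns verbatim and folds older ones into a summary."""

    summarize: Callable[[str, str], str]  # (old_summary, evicted_turn) -> new_summary
    keep_last: int = 2
    summary: str = ""
    turns: list[tuple[str, str]] = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        while len(self.turns) > self.keep_last:
            old_role, old_text = self.turns.pop(0)
            self.summary = self.summarize(self.summary, f"{old_role}: {old_text}")

    def as_context(self) -> str:
        recent = "\n".join(f"{role}: {text}" for role, text in self.turns)
        return f"Summary so far: {self.summary}\n{recent}" if self.summary else recent


def fake_summarize(old_summary: str, turn: str) -> str:
    # Stand-in for an LLM call: keep the first 20 characters of each evicted turn.
    return (old_summary + " | " if old_summary else "") + turn[:20]


buffer = BufferMemory()
compact = SummaryMemory(summarize=fake_summarize, keep_last=2)
for role, text in [("user", "My name is Asha."), ("ai", "Hello Asha!"), ("user", "What is my name?")]:
    buffer.add(role, text)
    compact.add(role, text)

print(buffer.as_context())
print(compact.as_context())
```

The trade-off is visible in the output: the buffer grows with every turn, while the summary version keeps the prompt short at the cost of losing detail from older turns.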

5. Agents

AI Agents are LLM-powered components that decide what action to take based on user input and available tools. Instead of following a fixed workflow, an agent reasons about what to do next.

An agent might decide to search the web, query a database, run a calculation, or call an API based on what the user is asking. It selects the right tool for each step and executes a plan autonomously.

This is the component that connects LangChain to agentic AI. Agent architectures built with LangChain can act with minimal human direction across multi-step workflows.
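The decide-act-observe loop behind an agent can be sketched in a few lines. This is an illustration in plain Python, not LangChain's agent API. The `fake_policy` function is a hypothetical stand-in for the LLM's reasoning step, with hard-coded rules, and both tools are made-up local functions.

```python
from typing import Callable

# Tools the agent may call; both are hypothetical local functions.
TOOLS: dict[str, Callable[[str], str]] = {
    "calculator": lambda expr: str(sum(float(x) for x in expr.split("+"))),
    "lookup": lambda key: {"shipping_fee": "40"}.get(key, "not found"),
}


def fake_policy(question: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM's reasoning step: returns (action, action_input).

    A real agent would ask the model to choose; here the rules are hard-coded.
    """
    if not observations:
        return ("lookup", "shipping_fee")
    if len(observations) == 1:
        return ("calculator", f"100+{observations[0]}")
    return ("finish", f"Total with shipping: {observations[-1]}")


def run_agent(question: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        action, action_input = fake_policy(question, observations)
        if action == "finish":
            return action_input
        # Execute the chosen tool and feed the result back into the next decision.
        observations.append(TOOLS[action](action_input))
    return "stopped: step limit reached"


final_answer = run_agent("What is the total for a Rs.100 item with shipping?")
print(final_answer)
```

The step limit matters in practice: without it, an agent that never decides to finish would loop indefinitely.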

6. Tools and Tool Integration

Tools are the capabilities you give to your agents. LangChain has built-in integrations for hundreds of tools including web search, code interpreters, SQL databases, vector stores, email, calendars, and third-party APIs.

You can also build custom tools. If your company has an internal API that the agent needs to call, you define it as a tool and the agent learns when and how to use it.
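One way to picture a custom tool is as a function plus a description the model can read. The sketch below uses a hypothetical `tool` decorator and `REGISTRY` written for this article, and is not LangChain's own tool API. The `order_status` function stands in for an internal company API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    description: str  # shown to the model so it can decide when to call the tool
    func: Callable[[str], str]


REGISTRY: dict[str, Tool] = {}


def tool(func: Callable[[str], str]) -> Callable[[str], str]:
    """Register a function as a tool, using its name and docstring as metadata."""
    REGISTRY[func.__name__] = Tool(func.__name__, (func.__doc__ or "").strip(), func)
    return func


@tool
def order_status(order_id: str) -> str:
    """Look up the shipping status of an order by its ID."""
    # Hypothetical internal API call, replaced by a fixed table here.
    return {"A100": "shipped", "A101": "processing"}.get(order_id, "unknown order")


def describe_tools() -> str:
    # This text would be placed in the agent's prompt.
    return "\n".join(f"- {t.name}: {t.description}" for t in REGISTRY.values())


print(describe_tools())
print(REGISTRY["order_status"].func("A100"))
```

The description does real work here: it is the only information the model has when deciding whether this tool fits the user's request, so it is worth writing carefully.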

What is RAG and How Does LangChain Enable It?

RAG stands for Retrieval-Augmented Generation. It is one of the most important patterns in LLM application development and LangChain is the most common framework for building RAG pipelines.

Here is the problem RAG solves. An LLM trained on public data knows nothing about your internal documents, your product catalog, your customer data, or anything proprietary. If you want the LLM to answer questions about that data, you have two options: fine-tune the model on your data, which is expensive and slow, or use RAG, which is faster, cheaper, and more flexible.

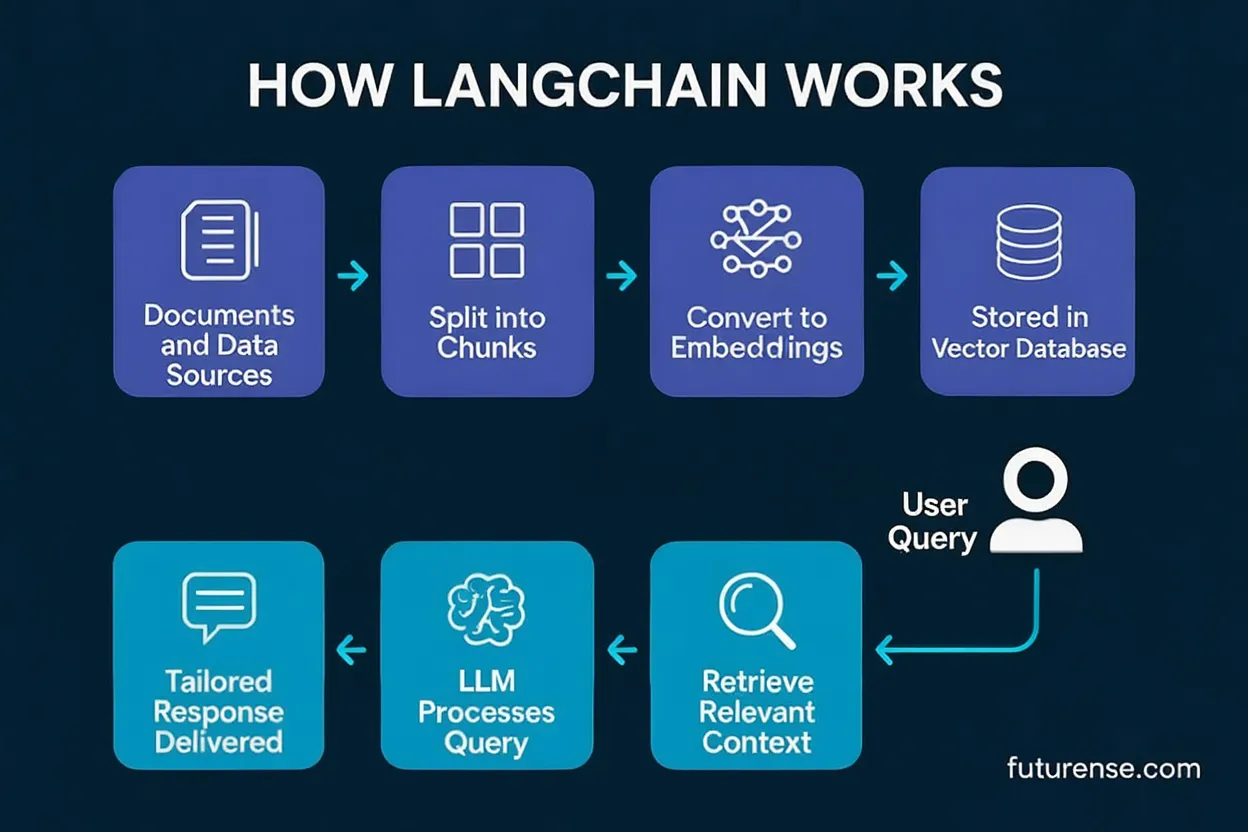

RAG works in four steps.

- Your documents are split into smaller chunks and converted into vector embeddings, which are numerical representations of meaning. These embeddings are stored in a vector database like Pinecone, FAISS, or Chroma.

- When a user asks a question, that question is also converted into an embedding.

- The system searches the vector database for document chunks whose embeddings are semantically similar to the question.

- Those relevant chunks are included in the prompt sent to the LLM, giving it the context it needs to answer accurately.

LangChain handles the entire pipeline: document loading, chunking, embedding, vector store integration, retrieval, and prompt construction. What would take weeks to build from scratch takes days with LangChain.
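The four steps above can be sketched end to end in plain Python. This is a toy, under loud assumptions: the "embedding" is just a word-count vector, which matches shared words rather than meaning, and the "vector database" is a Python list. A real pipeline would use a learned embedding model and a store such as FAISS or Chroma. All function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 12) -> list[str]:
    """Split text into chunks of roughly `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    # Toy embedding: word counts. Real systems use a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, store: list[tuple[str, Counter]], k: int = 1) -> list[str]:
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    joined = "\n".join(context)
    return f"Use only this context:\n{joined}\n\nQuestion: {question}"


docs = [
    "Refunds are issued within seven days of receiving the returned item.",
    "Standard shipping takes three to five business days across the country.",
]
# Step 1: chunk and embed the documents into an in-memory "vector store".
store = [(c, embed(c)) for d in docs for c in chunk(d)]
# Steps 2 to 4: embed the question, retrieve similar chunks, build the prompt.
question = "How long does shipping take?"
top = retrieve(question, store, k=1)
rag_prompt = build_prompt(question, top)
print(rag_prompt)
```

The final prompt contains only the shipping chunk, so the model answers from the retrieved text rather than from its training data.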

Real-World Use Cases of LangChain

Document Question Answering

Upload a 200-page policy document, annual report, or legal contract and ask questions in natural language. LangChain retrieves the relevant sections and the LLM answers based on actual document content. Companies use this for internal knowledge bases, compliance tools, and customer support.

Conversational Chatbots with Memory

Standard chatbots forget everything between sessions. LangChain-powered chatbots maintain context, remember user preferences, and hold coherent multi-turn conversations. They are widely used in e-commerce, banking, and SaaS products.

Agentic Workflows

Build autonomous agents that can search the web, query databases, run code, send emails, and coordinate with other agents to complete complex multi-step tasks. This is the foundation of agentic AI applications being deployed at companies like Flipkart, Razorpay, and Global Capability Centres (GCCs) in India.

Code Generation and Review

Developers use LangChain to build tools that generate boilerplate code, explain existing code, write tests, and review pull requests automatically. Tools like this are already in production at engineering teams across India.

Summarization Pipelines

Automatically summarize research papers, earnings reports, news articles, or support tickets. LangChain handles chunking, retrieval, and summarization logic in a clean pipeline.
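A common way to summarize text that is too long for one model call is a map-reduce pattern: summarize each chunk, then summarize the summaries. The sketch below is plain Python, not LangChain's summarization API, and `fake_summarize` is a hypothetical stand-in for an LLM call that just keeps the first sentence.

```python
from typing import Callable


def split_into_chunks(text: str, max_words: int) -> list[str]:
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def fake_summarize(text: str) -> str:
    # Stand-in for an LLM call: keep the first sentence of the input.
    return text.split(".")[0].strip() + "."


def map_reduce_summary(text: str, summarize: Callable[[str], str], max_words: int = 50) -> str:
    # Map: summarize each chunk independently so each call fits the context window.
    partials = [summarize(c) for c in split_into_chunks(text, max_words)]
    if not partials:
        return ""
    if len(partials) == 1:
        return partials[0]
    # Reduce: summarize the concatenated partial summaries into one result.
    return summarize(" ".join(partials))


report = "Revenue grew this quarter. Costs were flat. " * 20
summary_text = map_reduce_summary(report, fake_summarize, max_words=30)
print(summary_text)
```

With a real model in place of the stub, the chunk size would be chosen to fit the model's context window.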

AI-Powered Search

Replace keyword-based search with semantic search that understands intent. A user searching for "affordable smartphones with good camera" gets results based on meaning, not just keyword matching.

LangChain vs LlamaIndex: What is the Difference?

Both LangChain and LlamaIndex are popular LLM frameworks and beginners often confuse them.

In practice, many teams use both. LlamaIndex handles the data and retrieval layer. LangChain handles the agent logic and application orchestration. They complement each other rather than competing.

LangChain in India: Job Market and Career Scope

LangChain is no longer just a framework developers experiment with. It is a production requirement for AI engineering roles across India.

As of April 2026, Naukri lists over 3,300 active job postings in India that mention LangChain. Glassdoor shows more than 800 roles specifically requiring LangChain proficiency. Most of these roles are at product companies, fintech firms, and Global Capability Centres in Bangalore, Hyderabad, Mumbai, and Pune.

What these job descriptions typically require alongside LangChain:

- Python proficiency (mandatory in almost every posting)

- Experience with LLM APIs like OpenAI, Anthropic, or Hugging Face

- Hands-on RAG pipeline development

- Vector database experience with Pinecone, FAISS, or Chroma

- Familiarity with LangGraph for agentic workflows

- Docker and cloud deployment basics

Salary ranges for LangChain-relevant roles in India (2026):

Engineers who specialize in LangChain-based agentic systems and RAG pipelines earn a premium of 20% to 35% above general AI engineering roles at the same experience level.

How to Get Started with LangChain

LangChain is a Python-first framework. Before starting, you need basic Python knowledge, familiarity with APIs, and a working development environment.

Here is a practical path to building LangChain skills.

- Step 1: Set up your environment. Install Python, set up a virtual environment, and install LangChain using pip. Get an API key from OpenAI or use a free open-source model through Hugging Face.

- Step 2: Build your first chain. Start with a simple prompt template and LLM chain. Take a user question, format it, send it to a model, and print the response. This takes about 30 minutes and teaches you the core pattern.

- Step 3: Add memory. Build a chatbot that remembers the conversation. Add a conversation buffer memory module and test multi-turn interactions. This is where LangChain starts feeling genuinely powerful.

- Step 4: Build a RAG pipeline. Load a PDF or set of documents, split them into chunks, embed them into a vector store, and build a question-answering chain over them. This is the most in-demand LangChain skill in the Indian job market right now.

- Step 5: Build an agent with tools. Give your LLM access to at least two tools, such as web search and a custom function. Watch it reason about which tool to use and execute a multi-step task. This is the bridge to agentic AI engineering.

- Step 6: Deploy it. Wrap your application in a FastAPI endpoint, containerize it with Docker, and deploy it to a cloud platform. A deployed project is worth ten times a Jupyter notebook on your resume.

LangChain and LangGraph: What is the Relationship?

LangGraph is an extension of LangChain built specifically for constructing stateful, multi-agent applications as graphs.

LangChain handles chains and basic agents well. But for complex agentic workflows where multiple agents need to coordinate, make conditional decisions, loop through tasks, and maintain shared state, LangGraph provides more fine-grained control.
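To show what "conditional decisions, loops, and shared state" mean in graph terms, here is a small sketch in plain Python. It is not LangGraph's API: `NODES`, `EDGES`, and `run_graph` are hypothetical names, and the two "agents" are ordinary functions. Each node reads and updates one shared state dictionary, and an edge function decides which node runs next.

```python
from typing import Callable

State = dict
Node = Callable[[State], State]


def draft(state: State) -> State:
    # Hypothetical "writer" agent: records one more attempt in the shared state.
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state: State) -> State:
    # Hypothetical "reviewer" agent: approves only from the third attempt onward.
    return {**state, "approved": state["attempts"] >= 3}


def route_after_review(state: State) -> str:
    # Conditional edge: loop back to the writer until the reviewer approves.
    return "END" if state["approved"] else "draft"


NODES: dict[str, Node] = {"draft": draft, "review": review}
EDGES: dict[str, Callable[[State], str]] = {
    "draft": lambda state: "review",  # fixed edge
    "review": route_after_review,     # conditional edge
}


def run_graph(start: str, state: State, max_steps: int = 20) -> State:
    current = start
    for _ in range(max_steps):
        state = NODES[current](state)
        current = EDGES[current](state)
        if current == "END":
            return state
    raise RuntimeError("graph did not finish within max_steps")


final_state = run_graph("draft", {"attempts": 0, "approved": False})
print(final_state)
```

A fixed chain cannot express the loop from reviewer back to writer, which is the kind of control flow a graph makes explicit.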

The LangChain team built LangGraph as the foundation for production agentic systems. In 2026, most serious multi-agent applications in production use LangGraph under the hood.

If you are learning LangChain, plan to learn LangGraph next. They are complementary and most senior AI engineering roles expect familiarity with both.

TL;DR

- LangChain connects LLMs to memory, data, and tools, making them production-ready

- Its six core components are Models, Prompt Templates, Chains, Memory, Agents, and Tools

- RAG is the most in-demand LangChain skill in India right now

- Over 3,300 active job postings mention LangChain in India as of April 2026

- Freshers with a strong LangChain portfolio can expect Rs.8 to Rs.15 LPA

- Learn LangGraph next as most senior roles now expect both

Frequently Asked Questions

What is LangChain in simple terms?

LangChain is a Python framework that makes large language models actually useful in production. A raw LLM can respond to a prompt, but it has no memory, no access to your data, and no ability to take actions in the real world. LangChain solves all three by acting as the connective layer between an LLM and everything else an application needs, including databases, APIs, documents, and external tools.

What does LangChain do exactly?

LangChain does three things. It gives LLMs memory so they can maintain context across a conversation. It connects LLMs to external data sources so they can answer questions based on your documents, databases, or live information rather than just their training data. And it gives LLMs access to tools so they can take real actions like calling APIs, running code, searching the web, or triggering workflows. Together these capabilities turn a basic language model into a production-ready AI application.

Is LangChain a Python library?

Yes, LangChain is primarily a Python library, available as an open-source package you can install via pip. It is also available in JavaScript for web-based applications, but Python is the standard for AI and ML development in India and globally. You need a solid foundation in Python before you can use LangChain effectively in production.

Does ChatGPT use LangChain?

No, ChatGPT does not use LangChain. ChatGPT is a product built directly by OpenAI on top of their own models and infrastructure. LangChain is a developer framework that lets you build your own AI applications using OpenAI models or any other LLM. Think of it this way: ChatGPT is a finished product for end users. LangChain is a toolkit for developers who want to build their own AI products using the same underlying models.

Are LangChain and n8n the same?

No, they are different tools that serve different purposes but are often used together. n8n is a workflow automation platform that connects apps and services through a visual interface, similar to Zapier. LangChain is a developer framework specifically built for creating LLM-powered applications with memory, data access, and tool use. Many production AI systems use both: LangChain handles the AI reasoning and agent logic while n8n handles the broader workflow automation and service integrations around it.

Is LangChain free?

LangChain itself is free and open-source under the MIT license. You do not pay to use the framework. However, you will pay for the external services it connects to, such as OpenAI's API for model calls or a managed vector database. You can keep costs low while learning by using open-source models and local vector stores.
